When we do concurrent (or thread or process based) programming [1], we know that there is a scheduler [2] below the code. It does a no-parameter call to schedule a process, on some criteria. (My notes [14] and [15] may be some background here.)

The other day I discovered something interesting: a scheduler is not as transparent as I have thought!

I had thought that the code in my processes would look the same, independent of the capabilities of the scheduler. Or the other way around, that when I code, the software pattern I end up with, would be the same, independent of the scheduler. (cont. right)

When I once was introduced to an asynchronous runtime system in ANSI C, with source code available and all, I studied the scheduler and saw that it could be used to build a synchronous communication layer with channels, CSP / occam style on top of it. See ERCIM-2005, CPA-2006 and CPA-2007 papers about this [3].

The coding pattern used together with the scheduler was exactly the same as I was accustomed to - with SPoC (Southampton Portable occam Compiler), which translated occam to C, in the late nineties. Nice, beautiful state machines! Where I was used to CSP style programming, SDL (Specification and Description Language) was used, borrowed from telecomm - where data packets need to be sent between processes.

A producer is well behaved and about as fast as a consumer process. So, critical system buffer overflow was avoided by tuning towards expected max throughput. The pattern was FSM (Finite State Machines) used for modeling and coding. Messages arriving into a process which was in such a state that handling the message was not possible, were handled partly by design (by knowing how the other process worked), by tuning, by timeout and resending or by setting that message aside for later internal process work-scheduling.

With synchronous designs these facets will have been "factorized away". However, deadlock may be introduced, if a safe coding pattern and strict adherence to interprocess contracts are not respected. (cont. down left)

(cont. from above right) What tricked me was that I thought the state machine pattern could "best" be used in any context, not thinking about the scheduler below. And I had seen that the asynchronous messages could nicely be sent and received within the same state machine (send, send, receive, then new state).

So, when we built the CHAN_CSP layer we let each channel transition be a state in the state machine (*1). As a "first effect" (not side effect), distinctive application states would be part of the same state name space (part of the same switch/case).

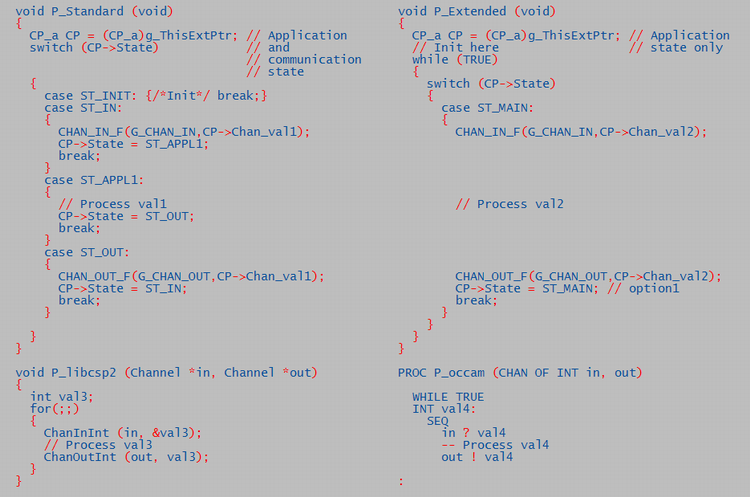

See process P_Standard above. It blocks for CHAN_IN, processes val1 and replies to CHAN_OUT, in three states. Processing could be put into ST_OUT, before CHAN_OUT, saving a state. But readability is probably better with three states.

I did not realize that while I solved it like this, the scheduler had been built for asynchronous systems, where a blocking state does not exist.

In the channel system, the first process on a channel blocks (by returning from the process, and not being scheduled again before the second contender on the channel, or timeout, sends a an empty "ready" message to the first) (*2). The blocking concept has no busy poll. This was well solved with the present scheduler. But, the well behaved "composite" hierarchical sub state machine (for channel states) could be seen as logical noise. Some could even think the whole concept as more complex.

But, then I realized that if the scheduler had an optional way to work, all the channel states would be factorized away!

The scheduler could reschedule to next code line after a return, or deschedule, to it. Without becoming a so called preemptive scheduler. On scheduling again to the next line (not to the standard scheduler's process function's first line address), the scheduler would also need to restore local process context CP.

Stack should be handled, if we allowed local process variables outside of CP->... The code would be like P_Extended above. There, val2 is processed with no distinct state. More like standard no-state programming.

This may be one step ahead and one back, depending on taste (pun). For, maybe we should have a separate state to process val2? However, maybe it's good to get rid of the channel states? At least if you don't believe in mind clogging sub-state machines or think that different state machines should be in separate switch / cases.

But see the simplicity of the public libcsp2 by Beton & Sputh. It may run on top of linux.

And last: the sheer beauty of occam,

appreciating that a PROC, CHAN

and ALT are first rate citizens of the

language - and that their usage rules are enforced by the compiler. But

alas, for none to use.